NVIDIA's AI Server Dominance Under Threat: Custom Chips & Rivals Reshape the Landscape

NVIDIA has long been synonymous with the meteoric rise of artificial intelligence, establishing itself as the undisputed leader in the AI server market. Its General-Purpose Graphics Processing Units (GPGPUs) have powered everything from groundbreaking research to the most sophisticated enterprise AI applications, making it Wall Street's darling and a driving force behind the tech sector's recent rallies. Yet beneath this seemingly impregnable position, tectonic shifts are underway. A convergence of factors is challenging NVIDIA's reign and signaling a dynamic new era for AI infrastructure. These include the world's largest tech giants developing their own custom AI accelerators, intensifying market competition, and evolving customer demands.

The journey from traditional compute to specialized AI servers marked a pivotal shift in the industry. NVIDIA, through its powerful GPUs and the ubiquitous CUDA platform, played a crucial role in delivering the immense processing capabilities required for complex AI tasks. Simultaneously, cloud service providers (CSPs) like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud rapidly adopted and offered this AI-specific infrastructure, making advanced AI capabilities more accessible to businesses of all sizes. This widespread availability, coupled with the proliferation of AI applications across healthcare, finance, automotive, and manufacturing, has fueled an insatiable demand for high-performance AI servers.

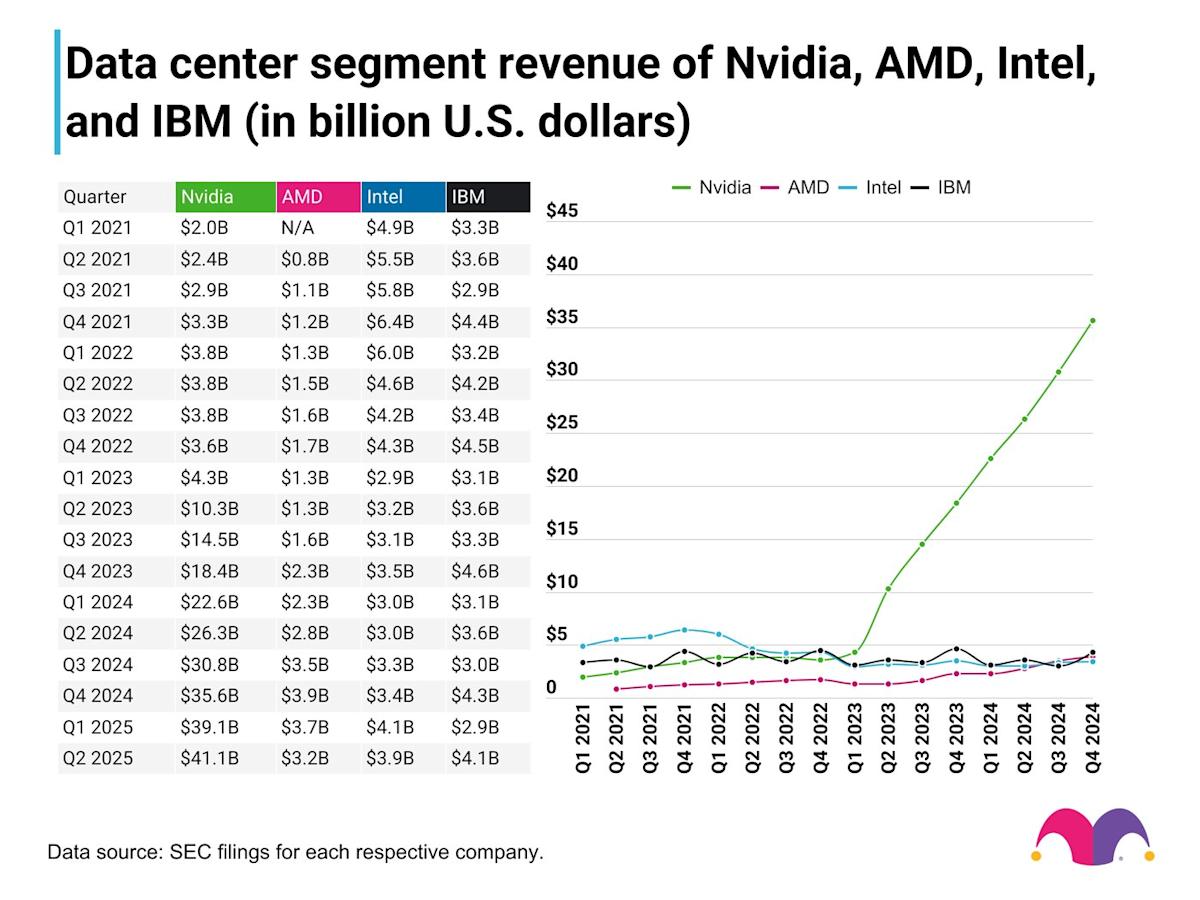

The Unquestioned Reign of NVIDIA in the AI Server Market

For years, NVIDIA's GPGPUs have been the accelerator of choice, forming the backbone of AI servers globally. Major CSPs such as Amazon, Google, and Microsoft have consistently been among NVIDIA's largest customers. They use NVIDIA's GPUs not only to support their vast internal AI workloads but also to host NVIDIA's premium DGX Cloud service inside their own data centers, offering cutting-edge AI capabilities to their customers. This symbiotic relationship solidified NVIDIA's position and established its hardware as the industry standard.

Beyond the raw processing power of its GPGPUs, NVIDIA's dominance stems from its comprehensive AI stack. The CUDA ecosystem, with its rich developer resources, extensive libraries, and robust prebuilt applications, offers exceptional programming flexibility and ease of use. This end-to-end solution raises a significant barrier to entry for competitors and creates a highly sticky environment for developers and enterprises alike. For many organizations, prior investment in NVIDIA's ecosystem, staff training, and existing CUDA-based software makes switching to alternative platforms a daunting and costly proposition, reinforcing NVIDIA's near-monopoly in AI acceleration.

The Rise of Custom AI Accelerators: Tech Giants Strike Back

Despite their deep reliance on NVIDIA, the very CSPs that fueled its rise are now leading the charge to reduce their dependence. Amazon, Google, and Microsoft, historically home to some of the most active CUDA developer communities, have poured significant capital into developing and deploying their own custom AI accelerators. These initiatives are not merely about competition; they are strategic moves driven by several critical factors:

- Cost Reduction: At the colossal scale at which these tech giants operate, even minor cost savings per chip can translate into billions of dollars annually. Custom ASICs (Application-Specific Integrated Circuits) designed precisely for their unique workloads can offer significant total cost of ownership (TCO) savings over general-purpose GPUs.

- Performance Optimization: While NVIDIA's GPGPUs are versatile, custom chips can be engineered for the specific AI tasks and inference patterns prevalent in the hyperscalers' environments. This can deliver higher performance, better energy efficiency, and lower latency for their particular workloads.

- Mitigating Vendor Lock-in: The fear of being solely dependent on a single vendor is a powerful motivator. Developing in-house solutions provides greater control over supply chains, future roadmaps, and pricing, reducing the risk associated with a near-monopoly provider.

- Strategic Advantage: Owning the underlying hardware gives these companies a competitive edge in delivering specialized AI services and products.

Beyond the major CSPs, other tech titans are also making significant plays. Meta has invested heavily in its custom MTIA accelerators for in-house AI workloads, although it has trained its Llama family of large language models primarily on NVIDIA GPU clusters. Apple, meanwhile, has built servers powered by its own M-Series chips to underpin the cloud capabilities of Apple Intelligence. This trend underscores a broader movement: companies with immense capital and specific, high-volume AI workloads are opting for bespoke silicon to gain a strategic advantage. You can delve deeper into this phenomenon by reading our related article: Custom AI Accelerators: Tech Giants Challenge NVIDIA's Reign.

Navigating the Complexities: Why Custom Isn't for Everyone

While the allure of custom AI chips is strong for the tech giants, such investments remain the preserve of a select few. Developing custom silicon is extremely capital-intensive, requiring billions of dollars in R&D, specialized engineering talent, and complex manufacturing partnerships. Fabless semiconductor companies such as Broadcom and Marvell increasingly offer custom AI silicon design services, yet even with such partners the barrier to entry remains prohibitively high for most companies.

Only a small subset of the world's largest companies operate at a scale where the large upfront investment in custom ASICs yields a measurable return on investment and substantial TCO savings. For the vast majority of enterprises, startups, and even mid-sized tech companies, the cost, complexity, and risk of custom chip development are simply too high.
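The scale argument above comes down to break-even arithmetic. A custom ASIC pays off only once the per-unit TCO savings across the whole deployed fleet exceed the one-time non-recurring engineering (NRE) cost of designing the chip. The Python sketch below illustrates this under stated assumptions: the simple cost model, the `AcceleratorCosts` class, the function names, and every dollar figure are hypothetical placeholders. None of them are data from this article or from any vendor.

```python
from dataclasses import dataclass


@dataclass
class AcceleratorCosts:
    """Hypothetical cost inputs for one accelerator program (illustrative only)."""
    nre_cost: float       # assumed one-time design, verification, and tape-out cost (USD)
    gpu_unit_tco: float   # assumed lifetime TCO of one merchant GPU, incl. power (USD)
    asic_unit_tco: float  # assumed lifetime TCO of one custom ASIC, incl. power (USD)

    @property
    def saving_per_unit(self) -> float:
        # TCO saved for every GPU that is replaced by a custom ASIC
        return self.gpu_unit_tco - self.asic_unit_tco


def break_even_units(c: AcceleratorCosts) -> float:
    """Units that must be deployed before per-unit savings repay the NRE cost."""
    if c.saving_per_unit <= 0:
        return float("inf")  # the ASIC never pays for itself
    return c.nre_cost / c.saving_per_unit


def net_savings(c: AcceleratorCosts, units: int) -> float:
    """Cumulative savings after deploying `units` ASICs (negative means a loss)."""
    return units * c.saving_per_unit - c.nre_cost


if __name__ == "__main__":
    # Placeholder figures chosen only to make the arithmetic easy to follow.
    costs = AcceleratorCosts(nre_cost=1e9, gpu_unit_tco=40_000, asic_unit_tco=30_000)
    print(break_even_units(costs))        # 100000.0
    print(net_savings(costs, 10_000))     # -900000000.0 (mid-sized fleet: a loss)
    print(net_savings(costs, 1_000_000))  # 9000000000.0 (hyperscale fleet: a gain)
```

Break-even volume is simply NRE cost divided by per-unit saving. Halving the per-unit saving therefore doubles the number of chips a company must deploy before the program pays off, which is why only fleets at hyperscale clear the bar.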

Practical Tip: For businesses without hyperscale operations, building custom AI hardware is usually an inefficient use of resources. Focus instead on optimizing your AI models for existing, widely available, and well-supported GPGPUs from NVIDIA or AMD, or leverage the diverse accelerator offerings of cloud providers. The flexibility, rich developer ecosystems, and mature software stacks of general-purpose solutions still offer the best value and fastest time-to-market for most applications.

As a result, despite the aggressive push from the largest players, the broader market will likely continue to depend on NVIDIA's GPGPUs for their programming flexibility, the robustness of the CUDA ecosystem, and the sheer breadth of prebuilt applications and developer resources available.

NVIDIA's Strategic Maneuvers and the Evolving Competitive Landscape

NVIDIA's position as market leader comes with intense scrutiny. As Wall Street's darling, its business decisions and quarterly results are meticulously analyzed by investors. This forces the company to balance maximizing profitability and shareholder returns against fostering innovation and maintaining market share. Despite some erosion in operating profitability, NVIDIA's commitment to innovation remains clear, as evidenced by a 48.9% year-over-year increase in R&D spending in 2024. This aggressive investment signals NVIDIA's intent to stay ahead of the curve, continuously pushing the boundaries of AI hardware and software.

However, this investor scrutiny may also be a contributing factor to NVIDIA's aggressive pricing tactics. While pricing power is a luxury afforded by its first-mover advantage and near-monopoly in the GPGPU market, high margins inevitably attract competitors and drive customers to explore alternatives. The landscape is increasingly populated by "frenemies" – CSPs who are both NVIDIA's biggest customers and now its most formidable competitors in the custom silicon space.

External factors also contribute to this evolving scenario. NVIDIA, while positioning itself as a partner-centric AI ecosystem enabler, faces a growing number of direct and indirect competitors. From other traditional GPU manufacturers like AMD to specialized AI accelerator startups and, of course, the in-house efforts of the tech giants, the AI server market is becoming increasingly fragmented and competitive. NVIDIA must continue to innovate rapidly, expand its software offerings, and potentially adjust its pricing strategies to retain its leadership amidst this rising tide of competition and customer exploration of alternatives.

Conclusion

NVIDIA's dominance in the AI server market is undoubtedly facing its most significant challenge yet. The strategic pivot by hyperscalers and tech giants towards custom AI accelerators marks a critical inflection point, driven by the need for cost efficiency, workload optimization, and freedom from vendor lock-in. While this trend poses a formidable threat to NVIDIA's near-monopoly in certain segments, the company's robust GPGPU technology, comprehensive CUDA ecosystem, and aggressive R&D investment position it to remain a central player.

The future of the AI server market will likely be a more diverse and competitive landscape. NVIDIA will continue to be a powerhouse, particularly for the vast majority of enterprises lacking the resources for custom silicon. However, the emergence of bespoke chips tailored for specific, large-scale deployments will push NVIDIA to innovate faster, potentially refine its pricing, and evolve its partnerships. Ultimately, this increased competition and diversification will benefit the broader AI industry, fostering greater innovation and offering a wider array of high-performance solutions for the ever-expanding demands of artificial intelligence.